The term “virtual reality” is now heard frequently. The National Institute for Fusion Science (NIFS) operates an immersive virtual reality device called the CompleXcope. The device has screens on four sides (front, left, right, and floor), each three meters high by three meters wide. Data are projected into the space enclosed by the screens, so that a person standing inside can see and feel virtual objects as if they were really there. Using this device, NIFS researchers analyze complicated plasma phenomena by visualizing plasma and fusion simulation data as well as experimental data from the Large Helical Device (LHD). Here we introduce recent research that uses the CompleXcope.

At NIFS, we are also advancing design research on the “helical-type demonstration reactor (DEMO)” as a step toward a future fusion power station. The purpose of the DEMO is to demonstrate the generation of fusion energy. Numerous devices will be attached to it, and the reactor is expected to have a very complicated structure.

Designing the helical-type DEMO requires solving numerous issues. (See back number 225.) The construction process and the maintenance procedures after operation begins are among the important ones. For example, when a part is to be removed from inside the reactor, we must consider in advance whether it will interfere with (strike) another part, and decide the location of parts, the construction process, and the maintenance procedures accordingly. It is also necessary to consider how parts are attached and replaced, the design of the robot arm used to move them, and the order in which parts are moved. Until now, we have based our design on information shown by design software on an ordinary two-dimensional computer display. With this method, however, three-dimensional information is projected into two dimensions, and depth information is lost. It was therefore extremely difficult to reflect the results of studying the parts and the robot arm’s movement in the design, and a new system was needed to resolve this problem.
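The loss of depth on a flat display can be illustrated with a minimal sketch. It assumes a simple orthographic projection along the viewing axis; the function name and the coordinates are hypothetical and are not taken from the NIFS design software.

```python
from typing import Tuple

Point3D = Tuple[float, float, float]
Point2D = Tuple[float, float]


def project_to_screen(p: Point3D) -> Point2D:
    """Orthographic projection along the viewing (z) axis: depth is dropped."""
    x, y, _z = p
    return (x, y)


# Two hypothetical part positions (in meters) that differ only in depth.
part_near: Point3D = (1.0, 2.0, 0.5)
part_far: Point3D = (1.0, 2.0, 4.0)

# On the flat display both parts land on the same point ...
same_on_screen = project_to_screen(part_near) == project_to_screen(part_far)

# ... although in three dimensions they are well separated.
depth_gap = abs(part_far[2] - part_near[2])
```

Seen on the screen alone, the two parts appear to coincide, so whether they would actually strike each other cannot be judged without the depth coordinate.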

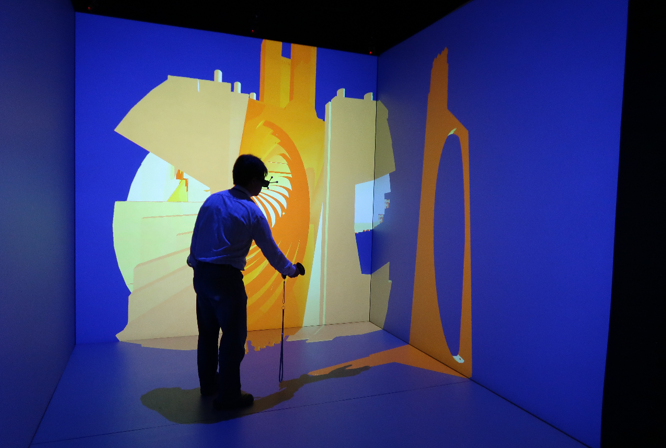

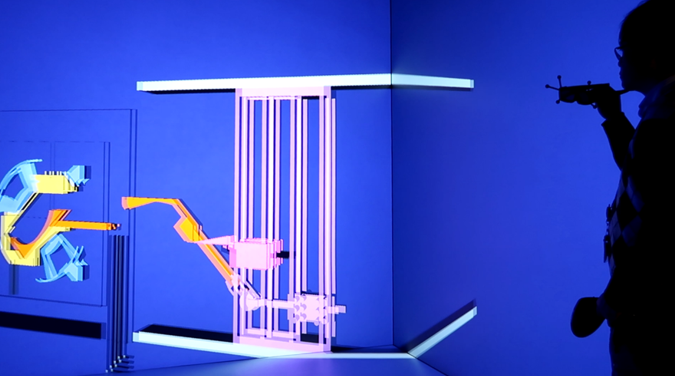

Thus, at NIFS, we have used the CompleXcope to construct a new system for confirming in three dimensions the movement of a robot arm and the positional relationships among the reactor parts of the helical DEMO. With this system, the design data of the DEMO are first projected into the virtual space; by walking inside the DEMO and changing the line of sight, one can confirm the positional relationships of parts from various directions. Next, data including the robot arm are projected, and one can confirm how the robot arm attaches and removes parts. Further, by projecting one’s own “hand” into the virtual space, one can hold a part and move it about. In this way, we have become able to confirm the movement of the robot arm and parts while standing at the center or at the edge of the DEMO. As a result, we can now effectively examine, inside a three-dimensional virtual space, questions such as whether parts will interfere with other parts and whether the movement of the robot arm and the maintenance procedures are appropriate.
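The interference question can also be posed numerically. The following is a minimal sketch, not the method used in the CompleXcope system: it assumes each part is approximated by an axis-aligned box and that a part is pulled out along a straight line. All names and dimensions are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box standing in for a reactor part (hypothetical)."""
    lo: Vec3
    hi: Vec3

    def moved(self, offset: Vec3) -> "Box":
        """Return a copy of this box translated by `offset`."""
        return Box(
            tuple(a + d for a, d in zip(self.lo, offset)),
            tuple(a + d for a, d in zip(self.hi, offset)),
        )


def boxes_interfere(a: Box, b: Box) -> bool:
    """True if the boxes overlap on all three axes (touching faces do not count)."""
    return all(a.lo[i] < b.hi[i] and b.lo[i] < a.hi[i] for i in range(3))


def first_interference(part: Box, obstacles: List[Box], direction: Vec3,
                       distance: float, steps: int = 100) -> Optional[float]:
    """Move `part` along `direction` in equal steps.

    Returns the travelled distance at the first sampled position where the
    part overlaps an obstacle, or None if no sampled position overlaps.
    """
    for k in range(steps + 1):
        t = distance * k / steps
        offset = tuple(t * d for d in direction)
        if any(boxes_interfere(part.moved(offset), ob) for ob in obstacles):
            return t
    return None


# A 1 m cube to be removed, and a neighbouring part 3 m away along z.
part = Box((0.0, 0.0, 0.0), (1.0, 1.0, 1.0))
neighbour = Box((0.0, 0.0, 3.0), (1.0, 1.0, 4.0))

hit_z = first_interference(part, [neighbour], (0.0, 0.0, 1.0), 5.0)  # pulled toward the neighbour
hit_x = first_interference(part, [neighbour], (1.0, 0.0, 0.0), 5.0)  # pulled sideways
```

Here `hit_z` reports a collision shortly after 2 m of travel, while `hit_x` is `None`. Because the path is sampled at discrete steps, an obstacle thinner than the step length could be missed, and real parts are far from box-shaped; this is one reason why inspecting the motion directly in three-dimensional virtual space is valuable.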

Through their interactivity (data projected into the virtual space change in response to one’s own movement, and the way data are displayed can be changed with a controller) and their feeling of immersion (the feeling of having entered the virtual space), virtual reality devices like those employed at NIFS are expected to be used in other fields as well. Research is advancing in various fields: for example, support systems for medical education that visualize MRI images and help determine surgical procedures, rehabilitation systems for walking training conducted inside virtual space, and systems for improving technique that visualize sports movements and assist in analyzing them. In the future, we will cooperate further with researchers in various fields and further advance visualization research that uses virtual reality devices. In this way, we will contribute to the realization of fusion energy.

Image 1: A photograph showing design data of the DEMO projected into the virtual space using the CompleXcope. One can walk inside the DEMO and change the perspective from which one looks. Further, using one’s “hand,” one can hold and move parts.

Image 2: A photograph showing some of the design data of the DEMO and the robot arm projected into the virtual space. The part (in orange) is carried by the robot arm (in pink), and the movement is confirmed.